What Is the Best AI Agent Stack in 2026? A Comprehensive Framework Comparison

Choosing the right AI agent framework in 2026? This comprehensive comparison covers LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, Claude Agent SDK, Google ADK, and Smolagents—helping you find the best stack for your specific use case.

A common question in AI communities right now goes something like this: "I'm trying to build my first autonomous agent system—what stack should I actually use?" The question surfaces weekly on subreddits like r/AI_Agents, r/LangChain, and r/MachineLearning. After testing twenty-plus frameworks myself and reviewing the current landscape as of March 2026, the answer is more nuanced than most blog posts suggest. There is no single "best" framework. The right choice depends on your team's technical maturity, your persistence requirements, and whether you need agents that collaborate or work independently.

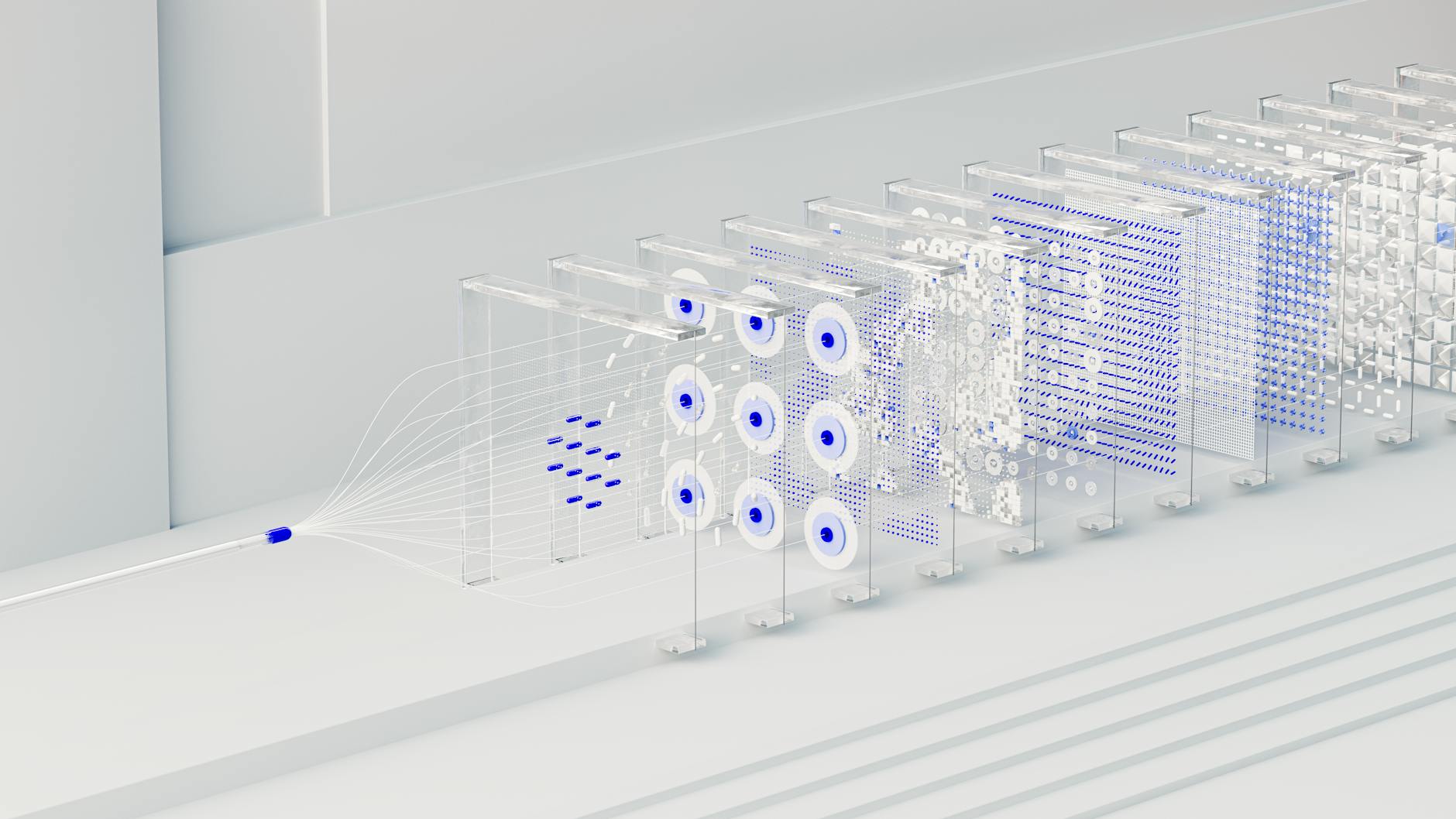

Agentic AI—systems where autonomous agents plan, reason, and coordinate—has moved from experimental demos to production deployments. Companies no longer want chatbots. They want systems that can research competitors, generate reports, review their own output, and file documents without human intervention. Building these systems requires more than just an LLM API key. You need orchestration frameworks that handle state management, error recovery, multi-agent coordination, and human oversight.

The Seven Frameworks Worth Considering

As of March 2026, at least seven production-grade AI agent frameworks are competing for attention in developer stacks. Each takes a fundamentally different approach to the same core problem: how do you build autonomous systems that actually work reliably?

LangGraph: When Reliability Is Non-Negotiable

LangGraph has become the default choice for teams building production agents that must survive failures and maintain complex state. Built on top of LangChain, it models workflows as directed graphs where nodes represent actions and edges represent transitions. With a checkpointer configured, state is saved after every step, so if your agent crashes mid-task after three hours of work, it can resume from the last checkpoint instead of starting over.
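To make the checkpointing idea concrete, here is a minimal plain-Python sketch. It is not LangGraph's API: the node names, the `run` helper, the `crash_before` switch, and the JSON checkpoint file are all assumptions made up for illustration. It only shows the principle of saving state after each node so that a rerun picks up at the next unfinished node.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical three-step workflow; each node takes and returns a state dict.
def research(state):
    return {**state, "notes": "raw findings"}

def draft(state):
    return {**state, "draft": f"report based on {state['notes']}"}

def review(state):
    return {**state, "approved": True}

NODES = {"research": research, "draft": draft, "review": review}
EDGES = {"research": "draft", "draft": "review", "review": None}  # None ends the run


def run(checkpoint_path, start="research", crash_before=None):
    """Walk the graph, saving state after every node so a rerun can resume."""
    path = Path(checkpoint_path)
    if path.exists():
        saved = json.loads(path.read_text())
        node, state = saved["next"], saved["state"]
    else:
        node, state = start, {"visited": []}

    while node is not None:
        if node == crash_before:
            raise RuntimeError(f"simulated crash before {node!r}")
        state = NODES[node](state)
        state["visited"] = state["visited"] + [node]
        node = EDGES[node]
        # Checkpoint: persist the state together with the node to run next.
        path.write_text(json.dumps({"next": node, "state": state}))
    return state


with tempfile.TemporaryDirectory() as tmp:
    ckpt = Path(tmp) / "checkpoint.json"
    try:
        run(ckpt, crash_before="review")
    except RuntimeError:
        pass  # the process "died" after research and draft completed
    final_state = run(ckpt)  # resumes at "review" without redoing earlier nodes
```

The second call never re-runs `research` or `draft`, which is the property that matters for long tasks. A real deployment would write checkpoints to a database rather than a temporary file.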

The framework's explicit control flow is its superpower. You define precisely how your agent moves between steps—no hidden magic, no unpredictable loops. Native support for human-in-the-loop approval gates means you can insert manual review checkpoints at critical decision points. LangGraph is also genuinely model-agnostic, allowing you to swap between OpenAI, Claude, Gemini, or local models without rewriting your orchestration logic.
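An approval gate can be sketched in a few lines of plain Python. Again, this is not LangGraph's interface: `Run`, `advance`, and the two payment steps are hypothetical names, and the "approval" is just a set of step names passed in by the caller. The point is that the workflow stops before a gated step and continues only once a human has signed off.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Run:
    state: dict
    pending: Optional[str] = None              # step waiting for a human decision
    log: list = field(default_factory=list)    # steps already executed


def propose_payment(state):
    return {**state, "payment": {"to": "ACME", "amount": 1200}}

def execute_payment(state):
    return {**state, "executed": True}

# (name, function, needs_approval)
STEPS = [
    ("propose_payment", propose_payment, False),
    ("execute_payment", execute_payment, True),
]


def advance(run, approvals=frozenset()):
    """Run steps in order, pausing before any gated step not yet approved."""
    for name, fn, gated in STEPS:
        if name in run.log:
            continue  # already done in an earlier call
        if gated and name not in approvals:
            run.pending = name
            return run
        run.state = fn(run.state)
        run.log.append(name)
    run.pending = None
    return run


run = advance(Run(state={}))
paused_at = run.pending                         # "execute_payment"
run = advance(run, approvals={"execute_payment"})
```

Because the run object records which steps have finished, the second `advance` call skips the proposal and goes straight to the approved step.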

The trade-offs are real. LangGraph has a steep learning curve—you need to think in graphs, not linear scripts. Even simple tasks require fifty-plus lines of code. The LangChain dependency brings complexity even if you only want the graph layer. But for enterprise teams building agents that handle financial transactions, legal document review, or healthcare data processing, LangGraph's reliability features justify the verbosity.

CrewAI: The Fastest Path to Working Multi-Agent Systems

CrewAI takes a radically different approach that has made it the most popular framework among product managers and business analysts who need agents without becoming software architects. Instead of graphs, you define a crew of agents with roles, goals, and backstories. They collaborate on tasks like a team of specialists—researchers, writers, editors, analysts working together.

The role-based metaphor feels natural. You define an "AI Research Analyst" with a goal of finding the latest developments, give them a backstory as a senior tech journalist, and let them delegate tasks to a "Technical Writer" agent. The YAML-first configuration means non-engineers can understand and even modify agent behaviors without touching Python code.

CrewAI has the lowest barrier to entry of any major framework. Its active community and growing ecosystem mean most common problems have documented solutions. Built-in delegation lets agents automatically hand off work when they recognize another agent's specialization would produce better results.
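The role-and-delegation idea can be sketched without any framework. The code below is not CrewAI's API. `Agent`, `Task`, the `skills` field, and the writer's goal and backstory are assumptions for illustration, and real delegation decisions are made by the model rather than by a skill lookup.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    skill: str


@dataclass
class Agent:
    role: str
    goal: str
    backstory: str
    skills: frozenset

    def handle(self, task, crew):
        """Do the task if it matches this agent's skills, else delegate."""
        if task.skill in self.skills:
            return f"{self.role} completed: {task.description}"
        for peer in crew:
            if peer is not self and task.skill in peer.skills:
                return peer.handle(task, crew)
        raise LookupError(f"no agent can handle skill {task.skill!r}")


analyst = Agent(
    role="AI Research Analyst",
    goal="Find the latest developments",
    backstory="Senior tech journalist",
    skills=frozenset({"research"}),
)
writer = Agent(
    role="Technical Writer",
    goal="Turn findings into clear prose",   # hypothetical goal
    backstory="Documentation lead",          # hypothetical backstory
    skills=frozenset({"writing"}),
)
crew = [analyst, writer]

# The analyst receives a writing task and hands it to the writer.
result = analyst.handle(Task("summarize findings as a blog post", "writing"), crew)
```

In this sketch `result` comes back from the Technical Writer even though the task was given to the analyst, which is the hand-off behavior described above.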

However, CrewAI offers less control over execution flow compared to LangGraph. Debugging multi-agent interactions can feel opaque when agents make unexpected delegation decisions. There is performance overhead from the role-playing abstraction layer. Choose CrewAI when you need to prototype multi-agent workflows fast, your agents represent distinct business roles, or your team includes stakeholders who need to understand the architecture without reading code.

AutoGen: The Conversation Pioneer (Now in Maintenance Mode)

Microsoft's AutoGen pioneered the multi-agent conversation pattern that many other frameworks have since adopted. Agents interact through structured dialogues—debates, round-robins, sequential chains. For tasks requiring consensus or iterative refinement between multiple perspectives, AutoGen's conversation-first approach remains elegant.

The framework's group chat capabilities allow multiple agents to debate, vote, and reach consensus. Built-in sandboxed Docker execution for generated code makes AutoGen particularly suited for software engineering agents that write and test code autonomously. Flexible conversation patterns support sequential flows, round-robin discussions, or custom routing logic.

But AutoGen faces an uncertain future. Microsoft shifted focus to the broader "Microsoft Agent Framework" announced in late 2025, placing AutoGen in maintenance mode. While existing deployments continue working, new feature development has slowed. Conversation-based routing can also produce unpredictable behavior for workflows requiring deterministic execution. Token costs accumulate rapidly when multiple GPT-4-class agents chat with each other extensively.
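A quick back-of-the-envelope calculation shows why chat costs grow faster than the number of turns. The sketch assumes every turn re-sends the whole transcript as input, with no caching or history truncation, and the token counts are invented for illustration.

```python
def chat_tokens(turns, prompt_tokens, reply_tokens):
    """Total (input, output) tokens when every turn re-sends the full history."""
    total_in = 0
    for k in range(turns):
        # Turn k sees the original prompt plus the k replies produced so far.
        total_in += prompt_tokens + k * reply_tokens
    total_out = turns * reply_tokens
    return total_in, total_out


short_chat = chat_tokens(turns=5, prompt_tokens=500, reply_tokens=300)
long_chat = chat_tokens(turns=20, prompt_tokens=500, reply_tokens=300)
# short_chat == (5500, 1500); long_chat == (67000, 6000)
```

Under these assumptions, four times as many turns costs about twelve times as many input tokens, because input grows roughly with the square of the turn count.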

OpenAI Agents SDK: Prototyping at OpenAI Speed

Released in March 2025, the OpenAI Agents SDK targets developers who want to move fast and primarily use OpenAI models. The framework optimizes for rapid prototyping with GPT-4o and o3, offering pre-built patterns for common agent behaviors like web search, file reading, and code execution.

The SDK excels when your use case fits OpenAI's ecosystem. Built-in integration with OpenAI's function calling, retrieval systems, and structured output parsing eliminates integration headaches. The simple API surface means you can have a functioning agent in under twenty lines of code.

The limitation is obvious: model lock-in. The OpenAI Agents SDK is designed for OpenAI models first, with other providers treated as second-class citizens. If your organization requires model diversity for cost optimization or vendor risk mitigation, this framework creates friction.

Claude Agent SDK: Security-First Agent Development

Anthropic's Claude Agent SDK emphasizes security and sandboxing, reflecting the company's broader focus on AI safety. Agents run in restricted environments with fine-grained permission controls. The SDK provides tools for monitoring agent reasoning, intervening when agents pursue concerning trajectories, and auditing decision trails.
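The combination of permission controls and an audit trail can be illustrated with a small wrapper around tool calls. This is a generic sketch, not the Claude Agent SDK's interface. `PermissionedToolbox`, `read_file`, and `delete_file` are hypothetical names, and the tools only return strings instead of touching the filesystem.

```python
from dataclasses import dataclass, field


@dataclass
class PermissionedToolbox:
    allowed: set                     # tool names the agent may call
    tools: dict                      # tool name -> callable
    audit_log: list = field(default_factory=list)

    def call(self, tool_name, **kwargs):
        """Record every attempt, then run the tool only if it is allowed."""
        permitted = tool_name in self.allowed
        self.audit_log.append(
            {"tool": tool_name, "args": kwargs, "permitted": permitted}
        )
        if not permitted:
            raise PermissionError(f"tool {tool_name!r} is not allowed")
        return self.tools[tool_name](**kwargs)


# Harmless stand-ins for real tools.
def read_file(path):
    return f"<contents of {path}>"

def delete_file(path):
    return f"deleted {path}"


toolbox = PermissionedToolbox(
    allowed={"read_file"},
    tools={"read_file": read_file, "delete_file": delete_file},
)

content = toolbox.call("read_file", path="report.txt")
try:
    toolbox.call("delete_file", path="report.txt")
    blocked = False
except PermissionError:
    blocked = True
```

Logging the attempt before checking permission means denied calls show up in the audit trail too, which is usually what a reviewer wants to see.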

For organizations in regulated industries such as healthcare, finance, and legal, the Claude Agent SDK's security features justify the learning curve. Claude models are trained with Anthropic's Constitutional AI approach, which helps prevent agents from engaging in harmful behaviors even when given ambiguous instructions.

Like OpenAI's offering, the Claude Agent SDK is built around its vendor's own models. The deepest integrations and best performance come from using Anthropic's current Claude Sonnet and Opus models.

Google ADK: Multimodal Agents and A2A Protocol

Google's Agent Development Kit (ADK), released in April 2025, focuses on multimodal agents that process text, images, audio, and video within unified workflows. The framework implements the Agent2Agent (A2A) protocol Google has been promoting as an industry standard for inter-agent communication.

ADK shines when your agents must process diverse data types. A customer support agent that analyzes screenshots, listens to voice complaints, and reads ticket history can be built more naturally in ADK than frameworks designed primarily for text. The A2A protocol support positions ADK well if Google's standard gains traction, allowing your agents to communicate with agents built on other frameworks.

The Gemini-first design means you'll get optimal performance with Google's models. Developers primarily using OpenAI or Anthropic APIs may find the experience less polished.

Smolagents: Lightweight Code-First Agents

Hugging Face's Smolagents represents the minimalist end of the spectrum. Designed for code agents—systems that write and execute Python to solve problems—Smolagents prioritizes simplicity and transparency over features.

The core agent logic fits in roughly a thousand lines of readable code. There are no hidden abstractions, no complex configuration systems. When agents write code, you see exactly what they wrote. When they fail, debugging is straightforward because there are fewer layers between your prompt and the execution.
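The code-agent pattern itself is simple enough to sketch. The example below is not Smolagents code. `fake_model` is a hard-coded stand-in for an LLM call and `run_code_agent` is a hypothetical helper. Restricting `__builtins__` as done here is not a security sandbox, so treat it as an illustration only and never run untrusted model output this way.

```python
def fake_model(task):
    """Stand-in for an LLM call: returns Python source that solves the task."""
    return "result = sum(n * n for n in numbers)"


def run_code_agent(task, inputs):
    """Ask the model for code, execute it, and return both code and result."""
    code = fake_model(task)
    # Expose only a few builtins plus the caller's inputs to the generated code.
    namespace = {"__builtins__": {"sum": sum, "len": len, "range": range}, **inputs}
    exec(code, namespace)
    return code, namespace["result"]


code, answer = run_code_agent("Sum the squares of the numbers", {"numbers": [1, 2, 3]})
# answer == 14, and `code` holds exactly what the "model" wrote
```

Returning the generated source alongside the result is what gives this style its transparency: the code that produced the answer is always available for inspection.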

Smolagents is ideal for educational purposes, research experiments, and situations where you need to understand precisely how the agent is making decisions. It is less suited for production multi-agent systems requiring sophisticated coordination or persistent state management.

How to Choose: A Decision Framework

Selecting an AI agent framework requires honest assessment of your constraints. Consider these dimensions:

Team Technical Maturity. CrewAI and Smolagents offer the gentlest learning curves. LangGraph requires comfort with graph-based programming and state management concepts. AutoGen and the vendor-specific SDKs fall somewhere in between.

Model Requirements. If you need true model flexibility—running GPT-4 for some tasks, Claude for others, local Llama models for sensitive data—LangGraph and CrewAI provide the cleanest abstractions. If you are standardized on a single provider, their respective SDKs offer tighter integration.

Persistence Needs. For agents running multi-hour tasks that must survive crashes and resume where they left off, LangGraph's checkpointing is unmatched. For short-lived, stateless operations, lighter frameworks suffice.

Multi-Agent Complexity. CrewAI's role-based approach scales naturally to teams of agents with distinct responsibilities. AutoGen's conversation patterns excel when agents must reach consensus. LangGraph handles multi-agent scenarios through explicit graph orchestration.

Human Oversight. LangGraph offers the most mature human-in-the-loop capabilities. Claude Agent SDK provides the strongest safety guardrails. If your agents handle high-stakes decisions, these features matter more than development speed.
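The dimensions above can be written down as a first-pass rule of thumb. The function below is only a sketch of this article's guidance. The `Requirements` fields, the ordering of the checks, and the CrewAI fallback are my own simplifications, and real decisions involve more trade-offs than a chain of `if` statements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Requirements:
    needs_crash_recovery: bool = False
    needs_human_approval: bool = False
    needs_consensus: bool = False
    multi_agent_roles: bool = False
    single_provider: Optional[str] = None   # "openai", "anthropic", or "google"
    code_only: bool = False


VENDOR_SDKS = {
    "openai": "OpenAI Agents SDK",
    "anthropic": "Claude Agent SDK",
    "google": "Google ADK",
}


def recommend(req):
    """Map requirements to a starting framework, highest-stakes checks first."""
    if req.needs_crash_recovery or req.needs_human_approval:
        return "LangGraph"
    if req.needs_consensus:
        return "AutoGen"  # note the maintenance-mode caveat discussed earlier
    if req.multi_agent_roles:
        return "CrewAI"
    if req.single_provider in VENDOR_SDKS:
        return VENDOR_SDKS[req.single_provider]
    if req.code_only:
        return "Smolagents"
    return "CrewAI"  # lowest barrier to entry as a default


high_stakes = recommend(Requirements(needs_crash_recovery=True, multi_agent_roles=True))
prototype = recommend(Requirements(multi_agent_roles=True))
```

Persistence and oversight are checked first because, for high-stakes agents, they matter more than development speed.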

The Stack Most Teams Actually Use

Based on production deployments tracked through the first quarter of 2026, a common pattern has emerged. Teams typically combine multiple frameworks rather than betting everything on one:

- LangGraph for core orchestration and state persistence

- CrewAI for defining agent roles and delegation logic

- Vendor SDKs (OpenAI or Claude) for specific high-value tasks where their models excel

- Smolagents or direct API calls for simple code execution tasks

This polyglot approach acknowledges that no single framework solves every problem optimally. The orchestration layer becomes LangGraph managing CrewAI-defined agents, with selective use of specialized tools where they add value.

What the Reddit Threads Get Wrong

Most "what's the best AI agent framework" discussions on Reddit devolve into framework loyalty debates that miss the point. A framework is not a sports team. The better question is: what does your agent need to do, and what failure modes can you tolerate?

Teams routinely over-engineer their first agent system, choosing LangGraph for simple proofs of concept that would work fine with CrewAI. Conversely, teams underestimate production requirements, building on lightweight frameworks and discovering months later that they need checkpointing and human oversight they cannot easily add.

The pragmatic approach is to start with the framework that matches your current complexity needs while building abstractions that allow migration if requirements change. The field is moving fast enough that framework popularity in 2026 may not predict framework maintenance in 2027.
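One way to keep migration possible is to have application code depend on a small interface of your own rather than on a framework directly. The sketch below uses hypothetical names (`AgentRunner`, `EchoRunner`, `generate_report`), and the backend is a placeholder. In a real project each framework would get its own thin adapter class with the same `run` method.

```python
from typing import Protocol


class AgentRunner(Protocol):
    """The only surface the rest of the application is allowed to use."""

    def run(self, task: str) -> str: ...


class EchoRunner:
    """Placeholder backend; a real adapter would wrap one framework here."""

    def __init__(self, label: str):
        self.label = label

    def run(self, task: str) -> str:
        return f"[{self.label}] {task}"


def generate_report(runner: AgentRunner, topic: str) -> str:
    # Business logic sees only AgentRunner, so swapping backends is one line.
    return runner.run(f"Write a short report on {topic}")


prototype_output = generate_report(EchoRunner("prototype"), "pricing")
production_output = generate_report(EchoRunner("production"), "pricing")
```

The design choice is to keep the interface as narrow as the application needs. The more framework-specific features leak through it, the harder a later migration becomes.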

Looking Forward: The Consolidation Prediction

The current fragmentation—seven competing frameworks with overlapping functionality—is unlikely to persist. Industry consolidation typically follows platform maturation, and AI agent frameworks are following familiar patterns.

LangGraph and CrewAI appear positioned as the independent, model-agnostic leaders, similar to how React and Vue coexist in frontend development. Microsoft's broader Agent Framework will likely absorb AutoGen's innovations while offering enterprise integration. The vendor SDKs will remain relevant for organizations deeply committed to single-provider ecosystems.

For developers choosing stacks today, the safest bet is model-agnostic frameworks that provide escape hatches. Building critical systems entirely on OpenAI's SDK creates vendor risk. Building on LangGraph or CrewAI while using OpenAI's models through their APIs preserves optionality.

Conclusion

The best AI agent stack in 2026 is the one that matches your specific constraints—team skills, model requirements, persistence needs, and oversight requirements. LangGraph offers unmatched reliability for complex stateful workflows. CrewAI provides the fastest path to working multi-agent systems. AutoGen pioneered conversation patterns but faces an uncertain future. The vendor SDKs offer tight integration at the cost of flexibility. Smolagents delivers transparency for code-focused use cases.

Most production teams will find themselves combining these tools rather than choosing just one. The framework landscape is still evolving rapidly, so prioritize model-agnostic solutions that preserve your ability to adapt as both the tools and the underlying models continue to improve.
