What Is RAG? A Complete Beginner's Guide to Retrieval-Augmented Generation in 2026


A common question in AI communities keeps popping up: "What exactly is RAG, and why is everyone talking about it?" If you've been exploring AI applications beyond simple ChatGPT prompts, you've likely encountered this acronym. RAG has quietly become the backbone of most production AI systems in 2026, yet explanations often drown readers in technical jargon before they grasp the core concept.

Here's the reality: RAG isn't complicated. It's a straightforward solution to a genuine problem that every business and developer faces when working with large language models. This guide will explain what RAG actually does, why it matters, and how you can start using it—no PhD required.

What Is RAG? The Simple Explanation

RAG stands for Retrieval-Augmented Generation. Break that down: "Retrieval" means finding relevant information. "Augmented" means adding to something. "Generation" refers to how AI creates responses. Put together, RAG is a technique that finds relevant information and feeds it to an AI model before generating an answer.

Think of it like an open-book exam. Instead of relying solely on memorized facts (which might be outdated or incomplete), the student can look up specific information in textbooks before answering. RAG gives AI models access to a "textbook"—your documents, databases, or knowledge base—before they respond to your question.

The concept emerged from research by Facebook AI (now Meta AI) in 2020, but it didn't gain mainstream traction until businesses started hitting the limits of raw LLM capabilities around 2023-2024. By 2026, RAG has become the default architecture for enterprise AI applications.

Why Regular AI Models Fall Short

To understand why RAG matters, you need to grasp what happens when you use a standard AI model like GPT-4, Claude, or Llama without any augmentation:

The Knowledge Cutoff Problem

Every AI model has a training date. GPT-4's knowledge stops at a specific point in time. Ask it about a product released last month, a competitor's latest pricing change, or internal company policies, and it simply cannot know. The model will either confess ignorance or, worse, hallucinate plausible-sounding but completely wrong information.

The Hallucination Problem

Large language models are probabilistic next-word predictors. When they encounter gaps in their knowledge, they don't stop—they improvise. This produces confident-sounding nonsense about your proprietary data, recent events, or niche technical domains. A 2024 study found that commercial LLMs hallucinated between 3% and 27% of the time on technical queries, depending on the domain.

The Private Data Problem

Your company's internal documents, customer records, and proprietary research aren't in any public training dataset. Even if they were, you wouldn't want them there for security reasons. Standard AI models have zero access to your private information unless you provide it.

RAG solves all three problems simultaneously.

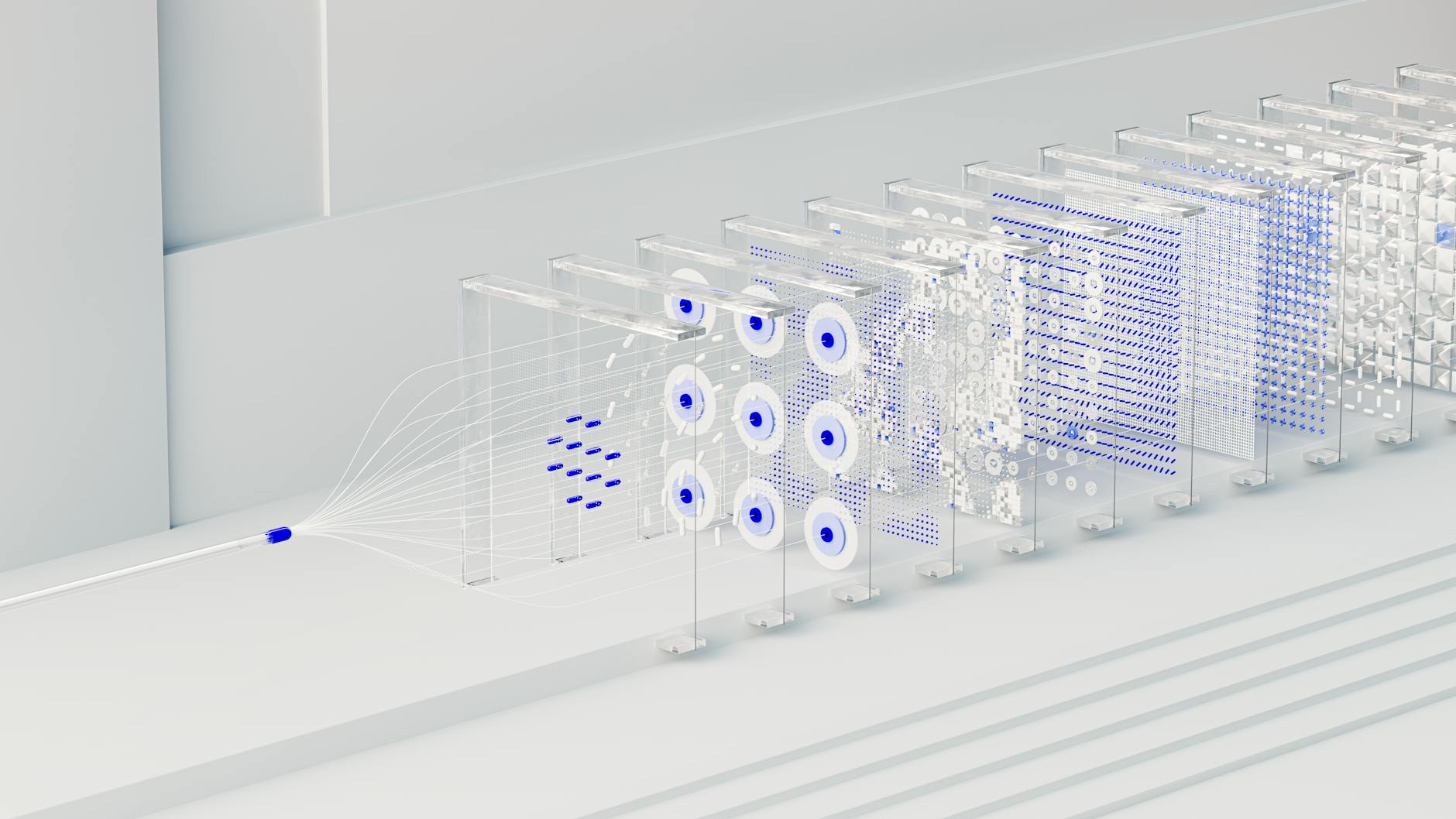

How RAG Works: The Two-Phase Architecture

RAG operates in two distinct phases: preparation and runtime. Understanding this separation clarifies why RAG is both powerful and practical.

Phase 1: Data Preparation (Offline)

This phase runs upfront when you set up your RAG system, and again whenever documents are added or changed. Think of it as building a library catalog system:

- Data Loading: Your source materials—PDFs, Word documents, web pages, database records—are ingested into the system. Modern RAG frameworks can handle hundreds of formats.

- Text Chunking: Documents are split into manageable pieces called "chunks." This isn't arbitrary; chunk size affects retrieval quality. Too small, and you lose context. Too large, and you include irrelevant information. Typical chunks range from 200 to 1,000 tokens.

- Embedding Generation: Each chunk is converted into a mathematical representation called an "embedding" using an embedding model (such as OpenAI's text-embedding-3 family, BGE, or E5). This transforms text into a vector: a list of numbers that captures semantic meaning.

- Vector Storage: These vectors are stored in a specialized database called a "vector database" or "vector store." Popular options include Pinecone, Weaviate, and Chroma, along with similarity-search libraries such as FAISS.
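The four preparation steps can be sketched in a few lines of plain Python. This is a toy illustration, not a production pipeline: `toy_embed` is a hypothetical stand-in for a real embedding model (it hashes words into a small vector, so it captures word overlap rather than meaning), chunking is simplified to one chunk per paragraph, and the "vector store" is just a list. All names and sample documents are assumptions made for the example.

```python
import hashlib
import math
import re

DIM = 64  # toy dimensionality; real embedding models use far more dimensions


def toy_embed(text):
    """Hypothetical stand-in for an embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def build_index(documents):
    """Load -> chunk (one chunk per paragraph) -> embed -> store in a list."""
    index = []
    for source, text in documents.items():
        paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
        for position, chunk in enumerate(paragraphs):
            index.append({
                "source": source,
                "position": position,
                "text": chunk,
                "vector": toy_embed(chunk),
            })
    return index


# Made-up sample documents standing in for loaded files.
docs = {
    "refunds.txt": "Refunds are issued within 14 days.\n\nContact support to start a refund.",
    "shipping.txt": "Standard shipping takes 3 to 5 business days.",
}
index = build_index(docs)
print(len(index), "chunks indexed")
```

In a real system, the embedding call would go to an actual model and the list would be replaced by one of the vector stores mentioned above; the overall shape of the pipeline stays the same.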

Phase 2: Runtime Retrieval (Online)

This phase happens every time someone asks a question:

- Query Embedding: The user's question is converted into a vector using the same embedding model from Phase 1.

- Similarity Search: The system searches the vector database for chunks whose embeddings are mathematically closest to the query embedding. This finds semantically similar content, not just keyword matches.

- Context Assembly: The top-k most relevant chunks are retrieved and assembled into a block of context that must fit within the model's context window.

- Augmented Prompt: A prompt is constructed combining the user's question with the retrieved context. It typically looks like: "Based on the following information: [retrieved chunks], please answer: [user question]."

- Response Generation: The LLM generates an answer grounded in the provided context.

The entire retrieval and generation process can complete in under two seconds, though actual latency depends on the vector store, the model, and the length of the answer.
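As a rough sketch of the runtime steps, the following plain-Python example embeds a question, ranks chunks by cosine similarity, and builds the augmented prompt. The `toy_embed` function is a hypothetical stand-in based on word counts (a real embedding model would match on meaning, not shared words), the chunks are made up, and the final LLM call is left out because it depends on your provider.

```python
import math
import re
from collections import Counter


def toy_embed(text):
    """Hypothetical stand-in for a real embedding model: plain word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, chunks, k=2):
    """Query embedding + similarity search: return the k closest chunks."""
    query_vec = toy_embed(question)
    # For brevity chunks are embedded here; normally this was done in Phase 1.
    scored = [(cosine(query_vec, toy_embed(chunk)), chunk) for chunk in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:k]]


def build_prompt(question, context_chunks):
    """Context assembly + augmented prompt."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return f"Based on the following information:\n{context}\n\nPlease answer: {question}"


chunks = [
    "Refunds are issued within 14 days of purchase.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available by email on weekdays.",
]
question = "How long does shipping take?"
top_chunks = retrieve(question, chunks, k=1)
prompt = build_prompt(question, top_chunks)
print(prompt)  # this string would then be sent to the LLM of your choice
```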

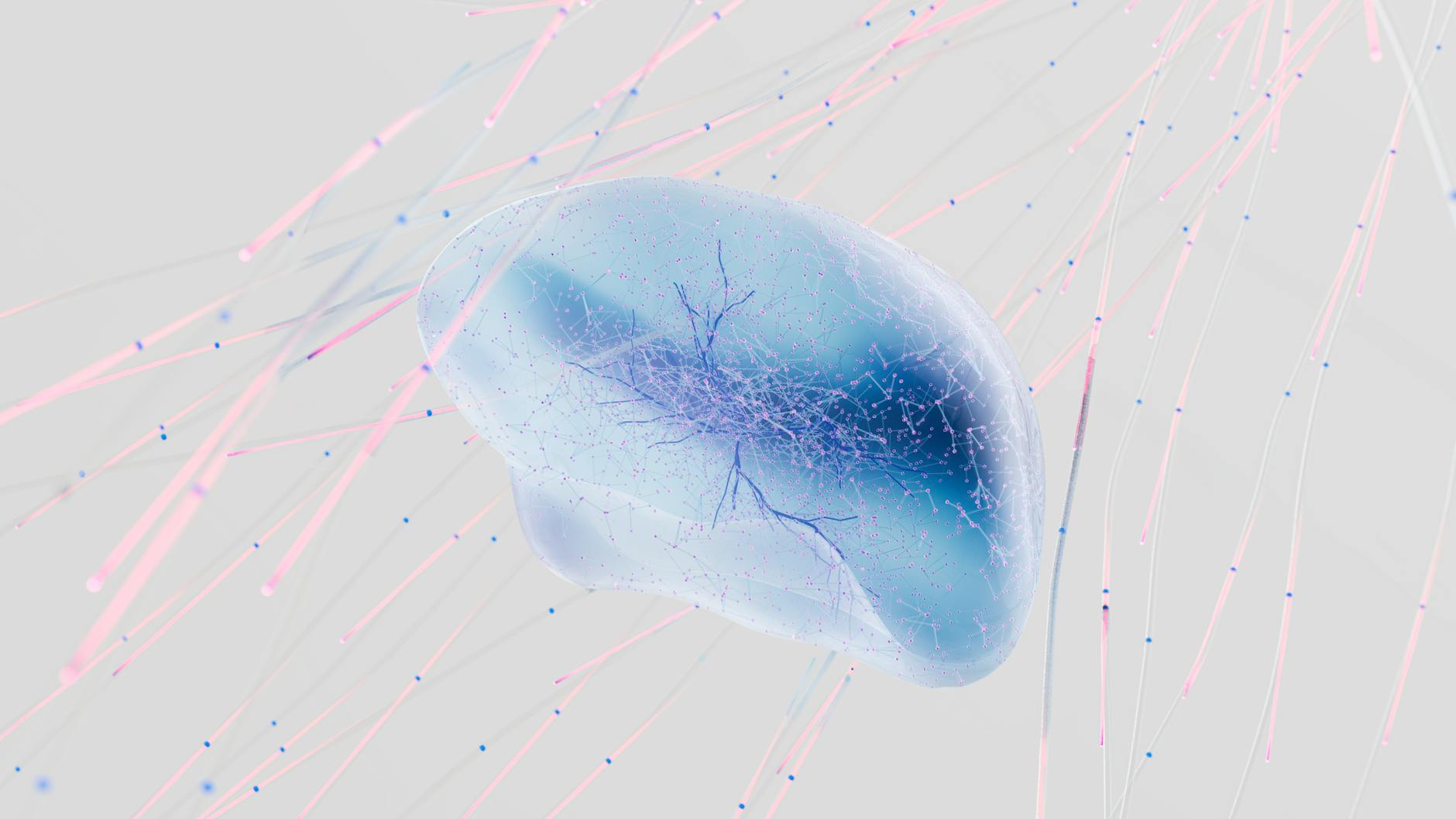

Vector Embeddings: The Secret Sauce

The magic of RAG depends on embeddings, so let's demystify them. An embedding is simply a numerical representation of text that captures meaning rather than just words.

Imagine every concept in language exists as a point in a multi-dimensional space (often several hundred to a few thousand dimensions, with 768 and 1,536 being common sizes). Words and sentences with similar meanings cluster together. "King" and "queen" are close together. "Paris" and "France" have a specific spatial relationship. "Python" (the snake) and "Python" (the programming language) occupy different regions.

When you convert a query and a document chunk into embeddings, you can calculate their mathematical similarity using cosine similarity or Euclidean distance. This lets you find relevant documents even when they don't share a single keyword with the query.

For example, a query about "reducing server latency" might retrieve a document discussing "improving database response times" because their embeddings are semantically close—even though they share no common words.
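To make the two similarity measures concrete, here is a small sketch using only the standard library. The three-dimensional vectors are invented for illustration; real embeddings come from a model and have far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: closer to 1.0 means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between two vectors: smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Made-up vectors: the query points in nearly the same direction as doc_close.
query = [0.9, 0.1, 0.0]
doc_close = [0.8, 0.2, 0.1]
doc_far = [0.0, 0.2, 0.9]

print(cosine_similarity(query, doc_close), cosine_similarity(query, doc_far))
print(euclidean_distance(query, doc_close), euclidean_distance(query, doc_far))
```

Both measures agree here that `doc_close` is the better match. Cosine similarity ignores vector length and compares direction only, which is one reason it is a common default for text embeddings.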

RAG vs. Fine-Tuning: Which Should You Use?

Beginners often confuse RAG with fine-tuning. They're completely different approaches to adapting AI for specific use cases:

| Aspect | RAG | Fine-Tuning |
|---|---|---|
| What changes | External knowledge base | Model weights/parameters |
| Data needed | Raw documents | Training examples (Q&A pairs) |
| Update frequency | Instant: just add documents | Slow: requires retraining |
| Cost | Lower: infrastructure only | Higher: compute for training |
| Transparency | High: can see source documents | Low: model internals opaque |
| Best for | Knowledge bases, Q&A systems | Behavior changes, tone, style |

The practical rule in 2026: start with RAG. It's faster to implement, cheaper to maintain, and easier to debug. Only consider fine-tuning if you need the model to change how it behaves, not just what it knows.

Popular RAG Tools and Frameworks

You don't need to build RAG from scratch. Several mature frameworks handle the heavy lifting:

LangChain

The most widely adopted framework, LangChain provides a modular architecture for building RAG pipelines. It offers pre-built "chains" for common patterns, integrations with dozens of vector databases and LLM providers, and abstractions that make swapping components straightforward. If you're building a production RAG application in Python or JavaScript, LangChain is the safe default choice.

LlamaIndex

Focused specifically on data retrieval and ingestion, LlamaIndex excels at complex document processing. It offers sophisticated indexing strategies, advanced retrieval algorithms, and better out-of-the-box performance for unstructured data. Choose LlamaIndex when document parsing and retrieval quality are your primary concerns.

Vector Databases

Your choice of vector store significantly impacts performance:

- Pinecone: Fully managed, serverless option with excellent performance. Best for teams that want minimal infrastructure overhead.

- Weaviate: Open-source with rich features including hybrid search (combining vector and keyword search). Great for self-hosted deployments.

- Chroma: Developer-friendly and easy to get started with locally. Ideal for prototyping and smaller applications.

- FAISS: An open-source similarity-search library from Meta (formerly Facebook) AI Research. It is a library rather than a full database, so it is best for research and custom implementations.

Real-World RAG Applications

RAG isn't theoretical—it's powering thousands of production systems right now:

Enterprise Knowledge Bases

Companies like Notion, Slack, and Microsoft have implemented RAG to let users query their internal documents conversationally. Instead of searching through folders, employees ask questions in natural language and receive answers synthesized from their own files.

Customer Support

Modern support chatbots use RAG to access product documentation, troubleshooting guides, and past support tickets. The result is dramatically more accurate responses compared to generic AI that tries to answer from training data alone.

Legal and Medical Research

Professionals in regulated industries use RAG systems that reference specific case law, regulations, or medical literature. The retrieval mechanism provides citations and source verification—critical for professional accountability.

Code Assistants

Tools like GitHub Copilot and Cursor use RAG to reference your specific codebase. When you ask about a function, they retrieve relevant files from your project rather than generating generic examples.

Common RAG Challenges and Solutions

RAG isn't magic. Production implementations face specific challenges that beginners should anticipate:

Chunking Strategy

Poorly sized chunks destroy retrieval quality. Too small, and context gets fragmented. Too large, and irrelevant information dilutes the signal. The solution involves experimentation—try chunk sizes from 200 to 1,000 tokens, with overlaps of 10-20% between chunks to preserve context.
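A sliding window is one simple way to produce overlapping chunks. The sketch below is a minimal version under stated assumptions: the function name and parameters are hypothetical, and it works on a pre-split list of tokens (here plain words stand in for model tokens, which a real tokenizer would count differently).

```python
def chunk_with_overlap(tokens, chunk_size=200, overlap=30):
    """Split a token list into windows of chunk_size that share `overlap` tokens."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reached the end of the document
    return chunks


# Tiny example so the overlap is easy to see.
words = [f"w{i}" for i in range(10)]
chunks = chunk_with_overlap(words, chunk_size=4, overlap=1)
for chunk in chunks:
    print(chunk)
```

Each chunk starts with the last token of the previous one, so a sentence that straddles a boundary still appears intact in at least one chunk when the overlap is large enough. The defaults (200 tokens with 30 shared) correspond to a 15% overlap.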

Retrieval Accuracy

Sometimes the right document isn't retrieved because the query and document use different terminology. Solutions include:

- Hybrid search: Combine vector similarity with keyword matching

- Query expansion: Use the LLM to generate multiple query variations

- Re-ranking: Apply a secondary model to re-order initial retrieval results
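For hybrid search, the vector and keyword results have to be merged into one ranking. One widely used way to do that is reciprocal rank fusion (RRF), sketched below. This is an illustration of that one technique, not the only option; the document IDs are made up, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each list contributes 1 / (k + rank) per document."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical results from the two retrievers, best match first.
vector_hits = ["doc_a", "doc_b", "doc_c"]
keyword_hits = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

Documents that appear near the top of both lists rise to the top of the fused list, while RRF never needs to compare the raw scores of the two retrievers, which are usually on different scales.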

Context Window Limits

Even with retrieval, you can only feed so much text into an LLM. If your top-k chunks exceed the model's context window, you need compression strategies or iterative retrieval approaches.
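The simplest guard is to keep adding ranked chunks until a token budget is used up. The sketch below assumes a hypothetical `fit_to_budget` helper and approximates token counts by splitting on whitespace; a real system would use the tokenizer that matches its model.

```python
def fit_to_budget(ranked_chunks, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the highest-ranked chunks whose combined size fits within max_tokens."""
    selected = []
    used = 0
    for chunk in ranked_chunks:
        cost = count_tokens(chunk)
        if used + cost > max_tokens:
            continue  # skip this chunk; a smaller, lower-ranked one may still fit
        selected.append(chunk)
        used += cost
    return selected


# Chunks are assumed to be sorted best-first by the retriever.
ranked = ["a b c d", "e f g h i j", "k l"]
context = fit_to_budget(ranked, max_tokens=6)
print(context)
```

Skipping oversized chunks instead of stopping at the first one is a design choice: it fills the budget more completely, at the cost of sometimes including a lower-ranked chunk in place of a higher-ranked one.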

Getting Started: Your First RAG Application

Ready to build? Here's a minimal path to your first working RAG system:

- Choose your stack: Start with Python, LangChain or LlamaIndex, and Chroma (for local development) or Pinecone (for production).

- Prepare your documents: Gather 5-10 documents in a supported format (PDF, TXT, DOCX).

- Run the ingestion pipeline: Use your framework's document loader to process files, split them into chunks, generate embeddings, and store in your vector database.

- Build the query interface: Create a simple function that takes user questions, retrieves relevant chunks, constructs an augmented prompt, and calls your LLM.

- Iterate on chunking: Test different chunk sizes and overlap percentages to optimize retrieval quality for your specific documents.

Most developers have a basic RAG system running within a few hours. The real work comes in optimizing retrieval quality and handling edge cases—but the foundation is surprisingly accessible.
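When comparing chunk sizes, it helps to have a number to compare. One simple option is a hit rate: for a handful of questions where you know which chunk holds the answer, check how often that chunk shows up in the top k results. The sketch below is an assumption-laden illustration; `fake_retrieve` and the chunk IDs are hypothetical stand-ins for your real retriever and data.

```python
def hit_rate_at_k(retrieve, labeled_questions, k=3):
    """Fraction of questions whose expected chunk appears in the top-k results."""
    if not labeled_questions:
        return 0.0
    hits = 0
    for question, expected_id in labeled_questions:
        if expected_id in retrieve(question, k):
            hits += 1
    return hits / len(labeled_questions)


# Hypothetical retriever: a canned lookup standing in for a real vector search.
canned_results = {
    "How do refunds work?": ["chunk_7", "chunk_2", "chunk_9"],
    "What is the shipping time?": ["chunk_4", "chunk_1", "chunk_3"],
}


def fake_retrieve(question, k):
    return canned_results.get(question, [])[:k]


labeled = [
    ("How do refunds work?", "chunk_2"),
    ("What is the shipping time?", "chunk_3"),
]
score_at_1 = hit_rate_at_k(fake_retrieve, labeled, k=1)
score_at_3 = hit_rate_at_k(fake_retrieve, labeled, k=3)
print(score_at_1, score_at_3)
```

Re-running the same labeled questions after each change to chunk size or overlap shows whether retrieval actually improved.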

The Future of RAG

RAG continues evolving rapidly in 2026. Emerging trends include:

Multimodal RAG extends retrieval to images, audio, and video. Instead of just text chunks, systems retrieve relevant images or video segments alongside written context.

Agentic RAG gives the system autonomy to perform multiple retrieval steps, reformulate queries, and access different data sources based on intermediate findings.

Structured RAG combines vector retrieval with knowledge graphs and structured databases, enabling precise lookups alongside semantic search.

Despite these advances, the fundamental architecture remains unchanged: retrieve relevant information, provide it to the model, generate better answers. That simplicity is why RAG has become foundational to practical AI applications.

Final Thoughts

RAG represents a pragmatic solution to the limitations of large language models. It doesn't require retraining models, managing expensive infrastructure, or collecting massive datasets. Instead, it cleverly augments existing AI capabilities with your own data, creating systems that are simultaneously more accurate, more current, and more trustworthy.

If you're building anything with AI that involves proprietary information, recent data, or factual accuracy requirements, RAG isn't optional—it's essential. The good news? The barrier to entry has never been lower, and the tools have never been better.

Start small, experiment with your own documents, and iterate. That's how every successful RAG application began.