What Are the Best LLM Models to Run with 128GB VRAM in March 2026?

Got 128GB VRAM and wondering which LLMs to run? From Qwen3-72B to DeepSeek-R1 70B, discover the optimal models for high-memory setups in March 2026.

If you've invested in a high-end setup with 128GB of VRAM—or you're eyeing one of the new AMD Strix Halo mini PCs with 128GB unified memory—you're probably wondering which large language models actually justify that hardware. It's a question popping up regularly in AI communities: with this much memory, what are the optimal models to run locally?

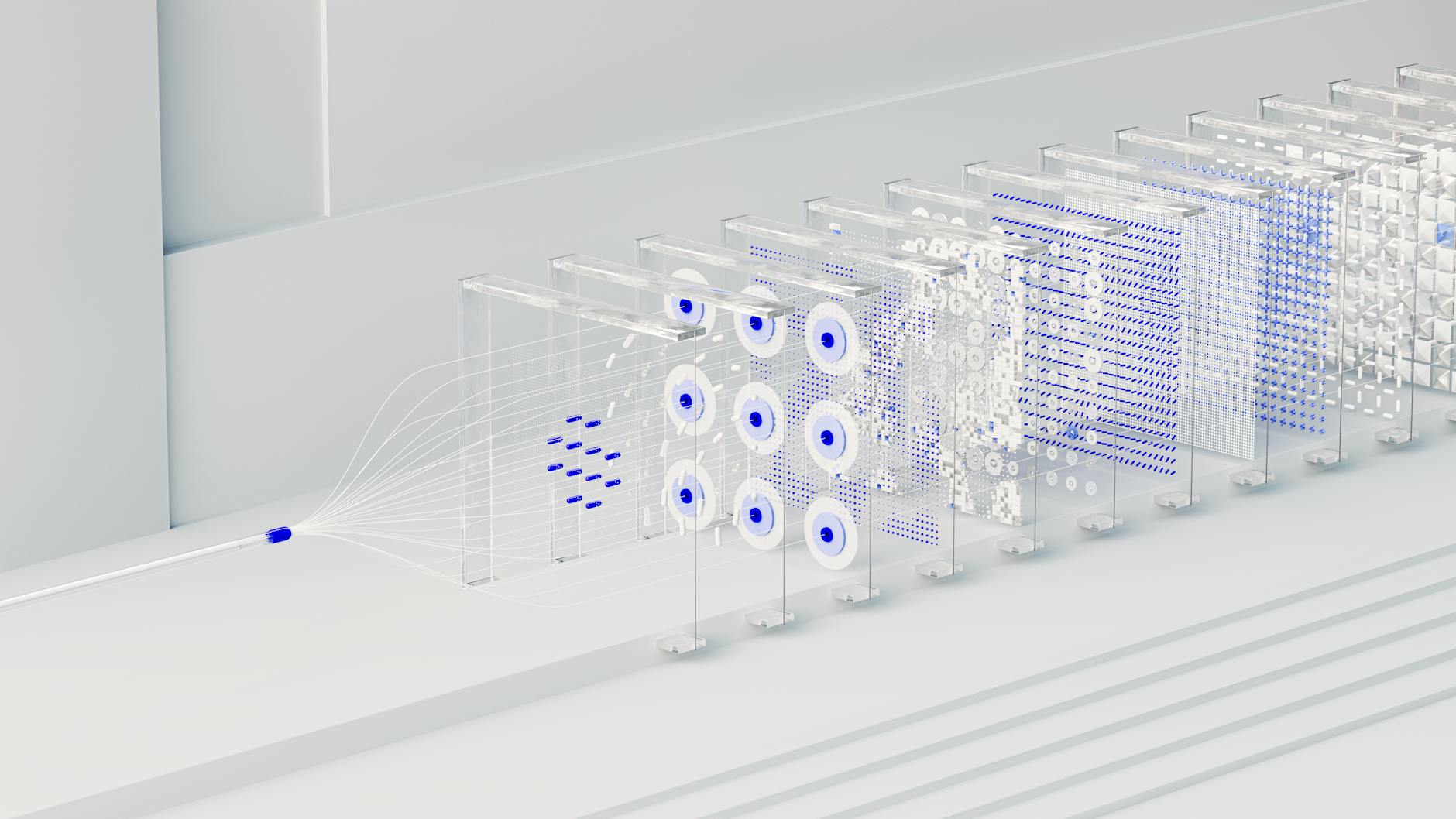

The short answer is that 128GB VRAM sits in a sweet spot. It's enough to run 70-billion-parameter dense models comfortably and even tackle some Mixture of Experts (MoE) models that would choke lesser hardware. But not all models are created equal, and the software stack you choose matters as much as the hardware.

Why 128GB VRAM Changes the Game

Most consumer GPUs top out at 24GB of VRAM (RTX 4090) or 32GB (RTX 5090), and a workstation card like the RTX A6000 has 48GB. That limits you to smaller models or aggressive quantization that degrades quality. With 128GB, you can run lightly quantized 70B models (Q5 through Q8), the size range where capabilities jump dramatically.

According to VRAM requirement calculations, a 70B parameter model at Q4 quantization needs approximately 42-48GB. At FP16, you're looking at around 140GB—just slightly over what 128GB can handle without offloading. This means 128GB systems can run 70B models at Q4 or Q5 with excellent quality, or smaller models at full precision.
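The arithmetic behind those figures is easy to reproduce. The sketch below estimates weight memory only, using the bits-per-weight of llama.cpp's simple block formats; the function names and the 16GB reserve are illustrative assumptions, not part of any library. Runtime overhead and the context cache come on top, which is roughly why the Q4 figure quoted above is higher than the raw weight size.

```python
# Approximate bits per weight for common llama.cpp block formats.
# Q4_0, Q5_0 and Q8_0 store a 16-bit scale per block of 32 weights,
# which adds 0.5 bits per weight on top of the nominal width.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q5_0": 5.5, "Q8_0": 8.5, "FP16": 16.0}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal GB (no KV cache, no overhead)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, fmt: str, budget_gb: float = 128.0,
         reserve_gb: float = 16.0) -> bool:
    """True if the weights fit while leaving reserve_gb free.

    reserve_gb is an assumed safety margin for context and runtime overhead.
    """
    needed = weight_memory_gb(params_billion, BITS_PER_WEIGHT[fmt]) + reserve_gb
    return needed <= budget_gb


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(70, bits)
        print(f"70B at {fmt}: {size:.1f} GB, fits in 128 GB: {fits(70, fmt)}")
```

For a 70B model this gives about 39GB at Q4_0 and 140GB at FP16, so everything up to Q8 fits and full precision does not.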

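But the real magic happens with MoE architectures. These models activate only a subset of their parameters per token, so you get the capability of a much larger model at the per-token compute cost, and therefore the speed, of a smaller one. All of the experts still have to sit in memory, which is exactly where 128GB helps.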

The Best Models for 128GB VRAM Setups

1. Qwen3-72B (Dense)

Alibaba's Qwen3 series has become the darling of the local LLM community, and the 72B dense variant is arguably the best general-purpose model for 128GB systems. Released in mid-2025, Qwen3 brought significant improvements in reasoning, multilingual capabilities, and instruction following.

On a 128GB Strix Halo system, Qwen3-72B runs at approximately 4-5 tokens per second—slow enough to notice, but fast enough for productive work. The model excels at:

- Complex reasoning and analysis

- Code generation across multiple languages

- Multilingual conversations (supporting 29 languages natively)

- Long-context document analysis (up to 128K tokens)

What makes Qwen3 particularly attractive is its efficiency. The Qwen team optimized the architecture with inference in mind, and it holds competitive quality against comparable models like Llama 3.3 70B at a similar memory footprint and generation speed on this hardware.

2. DeepSeek-R1 70B (Dense)

DeepSeek shocked the AI world in early 2025 with their R1 reasoning model, and the 70B distilled version has become a favorite for local deployment. This model specializes in step-by-step reasoning, making it ideal for:

- Mathematical problem solving

- Logical reasoning puzzles

- Code debugging and explanation

- Complex analysis requiring structured thinking

DeepSeek-R1 70B requires approximately 48GB at Q4 quantization, leaving roughly 80GB of headroom on a 128GB system for context caching or concurrent operations. Performance benchmarks show it outperforming many larger non-reasoning models on tasks requiring logical deduction.

3. Qwen3-30B-A3B (MoE)

Here's where 128GB VRAM really shines. Qwen3's MoE variant activates only 3 billion parameters per token from a 30B total parameter pool. This architecture delivers quality approaching the full 72B model while running significantly faster.

Community benchmarks show Qwen3-30B-A3B achieving 52 tokens per second on Strix Halo hardware—more than 10x faster than the dense 72B variant. The trade-off is slightly lower consistency on complex reasoning tasks, but for most applications, the speed improvement is transformative.

MoE models represent what many researchers believe is the future of efficient LLMs. If you're building applications requiring real-time interaction, this architecture makes 128GB systems genuinely competitive with cloud APIs.

4. Llama 3.3 70B (Dense)

Meta's Llama 3.3 70B remains a solid choice, especially if you prioritize ecosystem compatibility and tooling support. With native integration into platforms like Ollama, LM Studio, and Hugging Face, it's often the easiest model to get running.

Llama 3.3 brings improvements in multilingual support (8 languages) and a 128K token context window. At 128GB VRAM, you can run it at Q4 quantization with excellent performance. The model particularly shines at:

- General conversation and brainstorming

- Summarization tasks

- Creative writing assistance

- Integration with existing Llama-based workflows

Performance Expectations: The Reality Check

Understanding token generation speed is crucial for setting realistic expectations. Here's what you can expect on a 128GB Strix Halo system (Ryzen AI Max+ 395):

| Model | Parameters (Active) | Tokens/Second | VRAM Used |
|---|---|---|---|
| Qwen3-72B | 72B | 4-5 | ~48GB |
| DeepSeek-R1 70B | 70B | 4-5 | ~48GB |
| Qwen3-30B-A3B (MoE) | 30B (3B active) | 52 | ~20GB |
| Llama 3.3 70B | 70B | 4-5 | ~45GB |

The community consensus is that 3-5 tokens per second is the minimum for tolerable conversation. It's slower than cloud APIs, but for private, offline, or cost-sensitive applications, it's entirely workable.
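These speeds follow a simple pattern: token generation is usually limited by memory bandwidth, because every active weight has to be read once per generated token. The sketch below computes that theoretical ceiling. The 256 GB/s bandwidth figure for Strix Halo is an assumption on my part, as is treating the MoE model's active weights as 3/30 of its footprint; real throughput lands below the ceiling, and noticeably so for MoE models.

```python
# Assumed peak memory bandwidth for a Strix Halo system, in GB/s.
# This is an illustrative figure, not a measured one.
STRIX_HALO_BANDWIDTH_GB_S = 256.0


def decode_ceiling_tps(gb_read_per_token: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/second when decoding is bandwidth-bound.

    Each generated token requires reading every active weight once, so the
    ceiling is simply bandwidth divided by the bytes read per token.
    """
    return bandwidth_gb_s / gb_read_per_token


if __name__ == "__main__":
    # Dense 70B-class model at ~48 GB: all weights are read for each token.
    dense = decode_ceiling_tps(48.0, STRIX_HALO_BANDWIDTH_GB_S)

    # MoE model at ~20 GB total with 3B of 30B parameters active per token:
    # only about a tenth of the weights are read for each token.
    moe_active_gb = 20.0 * 3 / 30
    moe = decode_ceiling_tps(moe_active_gb, STRIX_HALO_BANDWIDTH_GB_S)

    print(f"dense ceiling: {dense:.1f} tok/s")  # about 5.3
    print(f"MoE ceiling:   {moe:.1f} tok/s")    # 128.0
```

The dense ceiling of about 5.3 tokens per second lines up with the 4-5 in the table, which suggests the 70B results are close to what the memory system allows.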

Software Stack Considerations

Your choice of inference engine dramatically impacts performance. For 128GB systems, particularly AMD-based setups, the landscape has evolved significantly in early 2026.

For general-purpose chat, the Vulkan backend with RADV drivers delivers the most stable experience. It's well-tested and provides consistent performance across different model types. However, if you're working with long context windows, Vulkan performance degrades beyond 4,000 tokens.

The ROCm/HIP backend offers higher theoretical performance and maintains speed even at 8,000+ tokens, but requires more setup and can be finicky. Most serious users end up running Fedora 42 or recent Ubuntu with the latest Mesa drivers, using llama.cpp with Vulkan for general use and switching to ROCm for long-context work.
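That switching habit can be captured in a small launcher helper. This is a minimal sketch, assuming you keep two separate llama.cpp builds; the `build-vulkan/bin` and `build-rocm/bin` directories are hypothetical names, and the 4,000-token threshold is taken from the rule of thumb above rather than from any benchmark of mine. The `-m`, `-c`, and `-ngl` options are standard `llama-server` flags.

```python
# Context length beyond which this article's rule of thumb prefers ROCm.
VULKAN_CONTEXT_LIMIT = 4000

# Hypothetical locations of two llama.cpp builds, one per backend.
DEFAULT_BUILD_DIRS = {"vulkan": "build-vulkan/bin", "rocm": "build-rocm/bin"}


def pick_backend(context_tokens: int) -> str:
    """Choose Vulkan for short contexts and ROCm for long ones."""
    return "vulkan" if context_tokens <= VULKAN_CONTEXT_LIMIT else "rocm"


def server_command(model_path: str, context_tokens: int,
                   build_dirs: dict = None) -> list:
    """Build a llama-server command line for the chosen backend."""
    dirs = build_dirs or DEFAULT_BUILD_DIRS
    backend = pick_backend(context_tokens)
    return [
        f"{dirs[backend]}/llama-server",
        "-m", model_path,            # path to the GGUF model file
        "-c", str(context_tokens),   # context window size in tokens
        "-ngl", "99",                # offload all layers to the GPU
    ]


if __name__ == "__main__":
    print(" ".join(server_command("model.gguf", 2048)))
    print(" ".join(server_command("model.gguf", 16384)))
```

The returned list can be passed straight to `subprocess.run` if you want the script to launch the server itself.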

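For NVIDIA systems in this memory class, CUDA remains the gold standard: it just works, with excellent performance and broad software support. Keep in mind that dual RTX A6000s total 96GB and a single A100 tops out at 80GB, so reaching 128GB on NVIDIA means a larger multi-GPU rig or a unified-memory machine such as the DGX Spark.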

What About Even Larger Models?

You might wonder if 128GB can handle the new crop of 100B+ or 400B models. The answer is complicated. A dense 120B parameter model at Q4 requires approximately 72GB, which fits in 128GB with room left for context and overhead. The real problem is speed: performance drops to 2-3 tokens per second, which becomes frustrating for interactive use.

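For models like DeepSeek V3.2 (671B total parameters, 37B active), quantization does not rescue you: even at 2 bits per weight the weights alone come to about 168GB, so the model cannot fit in 128GB without offloading, and quantization that aggressive significantly degrades quality. These models really want several hundred gigabytes of memory to shine.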

The sweet spot remains 70B dense models and MoE architectures in the 30-70B range. This is where 128GB delivers maximum value—enough quality for serious work, with acceptable speed.

Real-World Use Cases

So what can you actually do with this setup?

Local Coding Assistant: Running a large code model such as CodeLlama 70B or DeepSeek Coder 33B works well enough for code completion and explanation. The response latency is noticeable compared to GitHub Copilot, but you get privacy and zero subscription costs.

RAG Applications: The combination of large memory and reasonable inference speed makes 128GB systems genuinely practical for retrieval-augmented generation. You can load a substantial model, keep a vector database in memory, and process queries without cloud API latency or costs.

Document Analysis: With 128K context windows now standard, you can analyze entire research papers or legal documents in one shot. With 128GB of VRAM, a Q4 70B model and the cache for its full context both fit without offloading.

Batch Processing: When speed matters less than cost, 128GB systems excel at overnight batch jobs—summarizing document collections, processing survey responses, or generating training data.
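The document-analysis case is worth checking with numbers, because the context cache (the KV cache) grows linearly with context length. The sketch below uses the published Llama 3.3 70B shape (80 layers, 8 key/value heads, head dimension 128) and assumes an unquantized 16-bit cache; the function name is illustrative.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2) -> float:
    """Size of the KV cache in decimal GB.

    Each token stores one key and one value vector (hence the factor of 2)
    per layer, each of size n_kv_heads * head_dim.
    """
    bytes_per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return bytes_per_token * context_tokens / 1e9


if __name__ == "__main__":
    # Llama 3.3 70B shape with a full 128K (131,072-token) context, FP16 cache.
    cache = kv_cache_gb(n_layers=80, n_kv_heads=8, head_dim=128,
                        context_tokens=131072)
    weights = 45.0  # approximate Q4 footprint from the table above
    print(f"KV cache: {cache:.1f} GB")            # about 42.9
    print(f"Total:    {cache + weights:.1f} GB")  # about 87.9
```

At roughly 43GB for the cache plus 45GB for the weights, a full 128K context comes to about 88GB, which leaves a comfortable margin on a 128GB system.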

The Verdict

For 128GB VRAM in March 2026, the recommendations are clear:

- Best overall: Qwen3-72B for capability, Qwen3-30B-A3B for speed

- Best for reasoning: DeepSeek-R1 70B

- Best for ease of use: Llama 3.3 70B

- Future-proof choice: Any MoE architecture—these are becoming the standard

The hardware has reached a tipping point where local 70B models are genuinely practical for daily work, not just experiments. Whether you're privacy-conscious, cost-sensitive, or simply want offline capability, a 128GB setup delivers capabilities that were cloud-only just a year ago.

The question isn't whether this approach works—it clearly does. The question is whether the performance trade-offs align with your specific needs. For many developers and researchers, the answer is increasingly yes.