How Does an AI Language Model Actually Generate Text? From Tokens to Output Explained

Ever wonder what actually happens inside ChatGPT when you type a prompt? This deep dive explains tokenization, embeddings, self-attention, and autoregressive generation—the complete journey from input text to AI output.

A common question in AI communities—on Reddit's r/artificial, Stack Overflow, and countless tech forums—is deceptively simple: How does an AI language model actually generate text? Not the high-level "it predicts the next word" explanation, but the real mechanics. What happens inside those billions of parameters when you type a prompt?

The answer reveals one of the most elegant architectural designs in modern computing. Understanding it won't just satisfy curiosity—it will change how you interact with ChatGPT, Claude, and every other LLM you use.

The Core Concept: Autoregressive Generation

Large language models (LLMs) like GPT-4 and Claude operate on a principle called autoregressive generation. The term sounds intimidating, but it simply means "predicting the next thing based on everything that came before."

When you type "The cat sat on the," your brain immediately suggests completions: mat, floor, chair. You don't consider every word in the dictionary—you narrow it down based on context. Language models do the same thing, except they calculate mathematical probabilities for every possible token in their vocabulary (often 100,000+ options) and select one.

The mathematical reality looks like this:

- Probability of "The" (starting the sentence)

- Multiplied by probability of "cat" given "The"

- Multiplied by probability of "sat" given "The cat"

- Multiplied by probability of "on" given "The cat sat"

- And so on...

Each prediction depends entirely on previous tokens. No peeking ahead. No second chances. That constraint—predicting only what comes next—is what makes these models "autoregressive."
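Written as a formula, the product in the list above is the chain rule of probability. The symbols here are notation introduced for illustration: x_t stands for the t-th token and T for the length of the sequence.

```latex
P(x_1, x_2, \dots, x_T) = \prod_{t=1}^{T} P(x_t \mid x_1, \dots, x_{t-1})
```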

Stage 1: Tokenization—Breaking Text Into Pieces

Before any neural network processing occurs, your input undergoes tokenization. This is where raw text transforms into discrete units the model can process.

Tokenizers don't simply split on spaces. They use sophisticated algorithms (typically Byte Pair Encoding or its variants) to break text into subword units. Consider this example using OpenAI's tokenizer:

The phrase "How do transformers work?" becomes five tokens: [4438, 656, 43822, 990, 30].

In this example every word maps to a single token, and the question mark gets a token of its own, but that is not guaranteed in general. This matters practically: when an API advertises a "128K context window," it means roughly 128,000 tokens, not words or characters. A single word might be one token or several depending on how often it appeared in the data the tokenizer was built from. Common words get their own tokens; rare words get broken into pieces.
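To make the "common words stay whole, rare words get split" idea concrete, here is a minimal sketch of the merge-learning step behind Byte Pair Encoding. It is a toy under stated assumptions: it works on characters of whitespace-separated words, whereas production tokenizers operate on bytes with extra pre-processing. The function names (`apply_merge`, `train_bpe`, `tokenize`) and the tiny corpus are made up for illustration.

```python
from collections import Counter

def apply_merge(symbols, pair):
    """Replace each adjacent occurrence of `pair` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out

def train_bpe(corpus, num_merges):
    """Learn up to `num_merges` merges, always merging the most frequent pair."""
    words = Counter(tuple(word) for word in corpus.split())
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in words.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        new_words = Counter()
        for symbols, freq in words.items():
            new_words[tuple(apply_merge(list(symbols), best))] += freq
        words = new_words
    return merges

def tokenize(word, merges):
    """Split a word into characters, then replay the learned merges in order."""
    symbols = list(word)
    for pair in merges:
        symbols = apply_merge(symbols, pair)
    return symbols

merges = train_bpe("low low low low lower lowest", num_merges=2)
print(tokenize("low", merges))     # frequent word: ends up as one token
print(tokenize("lowest", merges))  # rarer word: split into several pieces
```

With more merges and a larger corpus, more words would collapse into single tokens, which is the frequency effect described above.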

Stage 2: Embeddings—Turning Tokens Into Vectors

Once tokenized, each token ID gets converted into a high-dimensional vector through an embedding table. Think of this as a massive lookup dictionary where each token maps to a unique point in mathematical space—typically 768 to 12,288 dimensions depending on the model size.

Here's where it gets interesting: the embedding vectors aren't random. Through training, semantically similar tokens cluster together. "King" and "emperor" occupy nearby regions. "Happy" and "joyful" share proximity. The model learns these relationships by processing billions of text examples during training.

At this stage, each embedding carries semantic information about what a token means—but crucially, it contains no information about where that token appears in the sequence. That comes next.
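A small sketch can show both ideas at once: the lookup is just row indexing, and "nearby" can be measured with cosine similarity. The three-word vocabulary and the 4-dimensional vectors below are invented by hand for illustration; real embeddings are learned and much larger.

```python
import math

# Hypothetical toy vocabulary and 4-dimensional embedding table.
# Real tables are learned during training and have far more dimensions.
token_to_id = {"king": 0, "emperor": 1, "banana": 2}
embedding_table = [
    [0.90, 0.80, 0.10, 0.00],  # king
    [0.85, 0.75, 0.20, 0.05],  # emperor
    [0.00, 0.10, 0.90, 0.80],  # banana
]

def embed(token):
    """Look up the vector for a token: a plain row index into the table."""
    return embedding_table[token_to_id[token]]

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

print(cosine_similarity(embed("king"), embed("emperor")))  # close to 1
print(cosine_similarity(embed("king"), embed("banana")))   # much lower
```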

Stage 3: Positional Encoding—Understanding Order

Transformers process all tokens simultaneously rather than sequentially like older RNN architectures. This parallelism enables massive speedups but creates a problem: how does the model know that "dog bites man" differs from "man bites dog"?

Positional encoding solves this by injecting position information into each embedding. The original Transformer paper used sinusoidal functions (sine and cosine waves at different frequencies) to create unique positional signatures. GPT-2 and GPT-3 use learned positional embeddings instead, and many newer models use rotary position embeddings (RoPE) applied inside the attention layers, but the principle remains: the model receives a distinct mathematical signal for each position in the sequence.

The formula looks conceptually like this:

final_embedding = token_embedding + positional_encoding(position)

Now each vector encodes both what the token is and where it sits in the sequence.
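For the sinusoidal variant, the addition above can be sketched in a few lines. The base of 10000 and the sine/cosine interleaving follow the original Transformer paper; the function names and the plain-list representation are choices made here for readability.

```python
import math

def positional_encoding(position, d_model):
    """Sinusoidal encoding: even indices use sine, odd indices use cosine."""
    encoding = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share one frequency, set by the even index 2k.
        angle = position / (10000 ** ((i - i % 2) / d_model))
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding

def final_embedding(token_embedding, position):
    """Element-wise sum of the token vector and its positional signature."""
    pe = positional_encoding(position, len(token_embedding))
    return [t + p for t, p in zip(token_embedding, pe)]

same_token = [0.5, -0.2, 0.1, 0.7]
print(final_embedding(same_token, position=0))
print(final_embedding(same_token, position=3))  # same token, different vector
```

The same token embedding produces a different final vector at each position, which is how "dog bites man" and "man bites dog" end up with different inputs.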

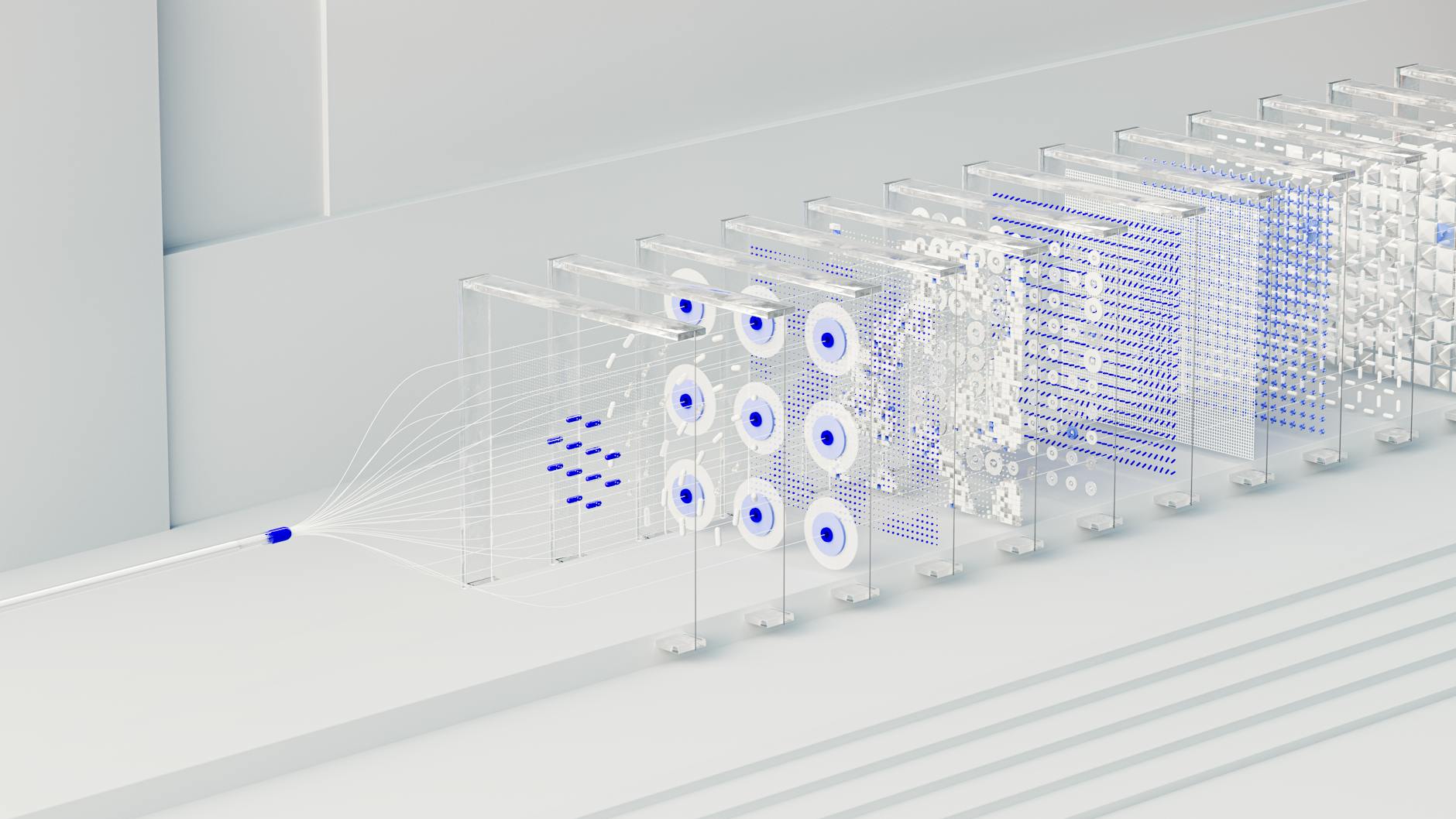

Stage 4: The Transformer Layers—Where the Magic Happens

This is where the heavy lifting occurs. Modern LLMs stack dozens of transformer layers—each containing the same fundamental components but learning increasingly abstract representations as information flows upward.

Self-Attention: The Revolutionary Mechanism

The heart of every transformer layer is multi-headed self-attention. This mechanism allows each token to "attend to" (focus on) every other token in the sequence, determining which relationships matter most.

Consider the sentence: "The cat sat on the mat because it was comfortable." When processing the word "it," readers use context to resolve it to "the mat" rather than "the cat," usually without noticing they are doing so. Self-attention gives models a comparable capability by creating weighted connections between tokens.

The mechanism uses three learned projections for each token:

- Query (Q): What am I looking for?

- Key (K): What do I offer?

- Value (V): What information do I carry?

The attention score between two tokens is computed as the dot product of one token's Query vector and the other's Key vector, scaled by the square root of the key dimension. Higher scores mean stronger relevance. These scores are normalized through softmax (converting them to weights that sum to 1) and then used to weight the Value vectors. The result: each token emerges with a context-aware representation incorporating information from relevant tokens across the sequence.
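Here is a minimal single-head sketch of that computation in plain Python. It assumes the Q, K, and V vectors have already been produced by the learned projections, and it divides the scores by the square root of the key dimension as in the original Transformer paper. Real implementations use batched matrix operations on GPUs; the nested lists are only for readability.

```python
import math

def softmax(scores):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors (one per token)."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # One score per key: dot(q, k) / sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one output dimension at a time
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

q = [[1.0, 0.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[10.0, 0.0], [0.0, 10.0]]
print(attention(q, k, v))  # output leans toward the first value vector
```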

Multi-headed attention runs this process multiple times in parallel, with each "head" potentially learning different relationship types. One head might capture syntactic relationships (subject-verb agreement). Another might track coreference (which pronoun refers to which noun). Yet another might identify semantic similarities (synonyms, antonyms). The largest GPT-3 model uses 96 attention heads per layer; OpenAI has not published the corresponding figure for GPT-4.

Feed-Forward Networks: Adding Non-Linearity

After attention, each token representation passes through a feed-forward neural network—typically two linear transformations with a non-linear activation function (like GELU) between them. These networks apply the same transformation to every token position independently, adding computational capacity without mixing information across positions.

Think of attention as "gathering context from other tokens" and feed-forward layers as "thinking independently about what I've gathered." Both are necessary.
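The "same transformation at every position" point is easy to see in a sketch. The weights below are tiny hand-picked placeholders rather than anything learned, and the exact erf-based form of GELU is used; some implementations use a tanh approximation instead.

```python
import math

def gelu(x):
    """Exact GELU: x times the standard normal CDF evaluated at x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def linear(x, weights, bias):
    """Dense layer; `weights` holds one row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

def feed_forward(x, w1, b1, w2, b2):
    """Two linear maps with a GELU in between, applied to one token vector."""
    hidden = [gelu(h) for h in linear(x, w1, b1)]
    return linear(hidden, w2, b2)

def feed_forward_all(sequence, w1, b1, w2, b2):
    """Apply the same network to every position independently."""
    return [feed_forward(x, w1, b1, w2, b2) for x in sequence]

# Hypothetical weights: 2 inputs -> 3 hidden units -> 2 outputs
w1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 0.0, 0.5], [0.0, 1.0, 0.5]]
b2 = [0.0, 0.0]
print(feed_forward_all([[1.0, 2.0], [3.0, -1.0]], w1, b1, w2, b2))
```

Because each position is handled on its own, changing one token's vector leaves the feed-forward output at every other position untouched.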

Layer Normalization and Residual Connections

Around each component, transformer layers use residual connections (adding the input to the output) and layer normalization (standardizing values). These techniques mitigate the vanishing gradient problem that plagued earlier deep networks and help keep training stable at massive scale.
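As a rough sketch, the two techniques fit in a dozen lines. This version follows the ordering of the original Transformer paper, normalizing after the residual addition; GPT-2 and many later models normalize before the sublayer instead. The learned scale and shift parameters of layer normalization are left out for brevity, and `residual_block` is a name chosen here for illustration.

```python
import math

def layer_norm(x, eps=1e-5):
    """Standardize one vector to zero mean and (roughly) unit variance."""
    mean = sum(x) / len(x)
    var = sum((xi - mean) ** 2 for xi in x) / len(x)
    return [(xi - mean) / math.sqrt(var + eps) for xi in x]

def residual_block(x, sublayer):
    """Add the sublayer's output back onto its input, then normalize."""
    y = sublayer(x)
    return layer_norm([xi + yi for xi, yi in zip(x, y)])

# `sublayer` stands in for attention or the feed-forward network.
doubled = residual_block([1.0, 2.0, 3.0], lambda v: [2.0 * vi for vi in v])
print(doubled)
```

The residual path means the input always has a direct route to the output, even if the sublayer contributes very little.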

Stage 5: The Language Head—Predicting Next Tokens

After passing through all transformer layers (the largest GPT-3 model has 96 of them), the final representations reach the language modeling head. This component projects the final hidden representation to vocabulary size, producing one raw score (a logit) for every possible next token.

Here's the critical step: the model applies softmax to these raw scores, converting them into probabilities that sum to 1.0. If the vocabulary contains 100,000 tokens, the output is a 100,000-dimensional probability vector where each entry represents how likely that token is to come next.
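In miniature, the projection and the softmax look like this. The three-word vocabulary, the 2-dimensional hidden state, and the weight rows are all invented for illustration; `lm_head` is simply a matrix-vector product with one row per vocabulary entry.

```python
import math

def lm_head(hidden, unembedding):
    """One logit per vocabulary entry: dot product of hidden state and row."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in unembedding]

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical 3-token vocabulary and 2-dimensional final hidden state
vocab = ["mat", "floor", "chair"]
unembedding = [[2.0, 0.0], [1.0, 0.0], [0.0, 5.0]]
hidden = [1.0, 0.0]

logits = lm_head(hidden, unembedding)
probs = softmax(logits)
for token, p in zip(vocab, probs):
    print(token, round(p, 3))
```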

The generation process typically selects the highest probability token (greedy decoding) or samples from the distribution based on a "temperature" parameter. Higher temperatures increase randomness, producing more creative but potentially less coherent outputs.
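The effect of temperature is easiest to see in code: the logits are divided by the temperature before softmax, so small values sharpen the distribution and large values flatten it. This is a minimal sketch; production samplers usually add further filters such as top-k or top-p, which are not shown here. The function names are illustrative.

```python
import math
import random

def softmax_with_temperature(logits, temperature=1.0):
    """Divide logits by temperature (must be > 0), then apply softmax."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy_decode(logits):
    """Index of the highest-scoring token (the limit as temperature -> 0)."""
    return max(range(len(logits)), key=lambda i: logits[i])

def sample(logits, temperature=1.0, rng=random):
    """Draw one token index at random according to the tempered distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

logits = [2.0, 1.0, 0.5]
print(softmax_with_temperature(logits, 0.2))  # sharply peaked on token 0
print(softmax_with_temperature(logits, 2.0))  # much flatter
print(greedy_decode(logits), sample(logits, temperature=1.0))
```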

The Generation Loop: One Token at a Time

Here's where autoregressive generation becomes concrete. The model doesn't generate entire paragraphs at once—it builds responses token by token:

- Input processing: Your prompt gets tokenized, embedded, and processed through all transformer layers

- First prediction: The model outputs probabilities and selects (or samples) the next token

- Append and repeat: That token gets added to the input sequence

- Full reprocessing: The entire updated sequence runs through the model again

- Next prediction: New probabilities emerge based on the extended context

- Continue until: An end-of-sequence token is generated or the maximum length is reached

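For a 500-token response, this loop runs 500 times. Each iteration requires a forward pass through the entire transformer stack, although in practice implementations cache the attention keys and values of earlier tokens (a "KV cache") so that only the newest token is computed from scratch. This sequential dependency explains why LLMs feel slower than simpler models: within a single response, token generation cannot be parallelized.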
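The loop itself is short once the model is treated as a black box. In the sketch below, `toy_model` is a hypothetical stand-in for a full transformer forward pass: it returns a hand-written next-token distribution instead of computing one, and the `<bos>` and `<eos>` markers are illustrative names. Greedy selection is used to keep the result deterministic.

```python
def toy_model(tokens):
    """Hypothetical stand-in for a forward pass: context -> next-token probs."""
    last = tokens[-1]
    if last == "the":
        # Context matters: after "on ... the" prefer "mat", otherwise "cat".
        if "on" in tokens:
            return {"mat": 0.7, "cat": 0.3}
        return {"cat": 0.7, "mat": 0.3}
    table = {
        "<bos>": {"the": 1.0},
        "cat": {"sat": 1.0},
        "sat": {"on": 1.0},
        "on": {"the": 1.0},
        "mat": {"<eos>": 1.0},
    }
    return table[last]

def generate(model, prompt, max_new_tokens=10, eos="<eos>"):
    """Autoregressive loop: predict, append, repeat until EOS or the limit."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        probs = model(tokens)                   # one model call per new token
        next_token = max(probs, key=probs.get)  # greedy selection
        if next_token == eos:
            break
        tokens.append(next_token)
    return tokens

print(generate(toy_model, ["<bos>"]))
```

Swapping the greedy line for a sampling step would give the temperature-controlled behavior described earlier without changing the loop's structure.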

The Masked Attention Trick

Wait—you might wonder how a model that sees all tokens simultaneously manages to only predict future tokens during training. Doesn't it cheat by looking ahead?

Transformers use causal masking to prevent this. During training and inference, the attention mechanism gets blocked from accessing future positions. When predicting the 10th token, the model can only attend to positions 1-9. This "look-ahead mask" ensures the autoregressive property holds—each prediction depends only on previous tokens.

Without masking, the model would achieve perfect training accuracy by simply copying the next token, learning nothing useful. The mask forces genuine prediction based on context.
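A causal mask is a lower-triangular pattern over positions. One common way to apply it, sketched below, is to set the scores of disallowed positions to negative infinity before softmax so their attention weights come out as exactly zero. The function names are illustrative.

```python
import math

def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]

def masked_softmax(scores, mask_row):
    """Softmax over one row of scores, ignoring positions the mask forbids."""
    # Forbidden positions become -inf, and exp(-inf) is 0.
    masked = [s if allowed else float("-inf")
              for s, allowed in zip(scores, mask_row)]
    m = max(masked)  # finite, because every position may attend to itself
    exps = [math.exp(s - m) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

mask = causal_mask(4)
for row in mask:
    print(["x" if allowed else "." for allowed in row])

# The second position can only see positions one and two
print(masked_softmax([1.0, 1.0, 1.0, 1.0], mask[1]))
```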

Why This Architecture Dominates

The transformer design—introduced in the landmark 2017 paper "Attention Is All You Need" by Vaswani et al.—won for several reasons:

Parallelization: Unlike RNNs that process tokens sequentially, transformers handle entire sequences simultaneously during training. This enables massive GPU parallelism and dramatically faster training.

Long-range dependencies: Attention mechanisms can directly connect distant tokens. In a 4,000-token document, the last token can attend to the first as directly as to its immediate neighbor, something RNNs struggled with due to vanishing gradients.

Scalability: Transformer performance improves predictably with more parameters and data. These "scaling laws" drove the race from GPT-2's 1.5 billion parameters to GPT-3's 175 billion and on to GPT-4, whose size OpenAI has not disclosed (unofficial estimates put it around 1.8 trillion).

Transfer learning: Pre-trained transformers can be fine-tuned for specific tasks with relatively little additional data, making them economically practical for diverse applications.

The Hidden Costs Nobody Talks About

For all its elegance, autoregressive generation has serious limitations that affect every interaction you have with LLMs:

No revision capability: Once a token is generated, it's locked in. If the model starts with a factual error, such as claiming "Paris is the capital of Germany," the rest of the response is conditioned on that error. The model cannot go back and edit earlier tokens; at best it can issue a correction in later ones. This helps explain why you sometimes see models double down on errors rather than correcting them.

Exposure bias: During training, the model predicts each token from real human-written text (a setup known as teacher forcing). During inference, it conditions on its own predictions, which are sometimes wrong. This mismatch can cause errors to compound: one bad prediction makes the next more likely to be wrong.

Latency constraints: Because tokens are generated sequentially, a 1,000-token response requires 1,000 forward passes. Even on fast hardware, this creates perceptible delays. Tokens can't be streamed faster than the model can compute them.

No global coherence checking: The model never sees its complete output before you do. It can't check whether the conclusion contradicts the introduction or whether the tone shifted mid-paragraph. It's like writing a novel one sentence at a time without ever rereading the whole thing.

What's Coming After Transformers?

The dominance of autoregressive transformers isn't guaranteed forever. Research in 2025-2026 is exploring alternatives:

Diffusion models for text: Inspired by image generation, these models create text in multiple passes—starting with a noisy draft and refining it. Models like LLaDA demonstrate that non-autoregressive approaches can achieve competitive coherence while allowing revision.

Hybrid architectures: Future systems might combine approaches—autoregressive generation for initial drafting followed by separate editing passes for logic, grammar, and consistency checking.

Test-time compute scaling: Rather than relying only on larger models, newer approaches like OpenAI's o1 and DeepSeek's R1 use "chain of thought" reasoning at inference time, generating extended intermediate reasoning (which can include trying and revising approaches) before producing final outputs. This trades latency for accuracy without requiring bigger models.

What This Means for Your Prompts

Understanding token-level generation changes how you should interact with LLMs:

Lead with context: The more relevant context you provide upfront, the better the model can predict appropriate continuations. Don't make the model guess what you want.

Expect cascading errors: If the model starts wrong, stop and correct it. It cannot revise tokens it has already emitted the way a human can edit a draft.

Understand token limits: The prompt and the response share the same context window. As a conversation approaches the limit, earlier content may be truncated or the request rejected, and quality can degrade on very long inputs. Budget your tokens wisely.

Temperature matters: Lower temperatures (0.0-0.3) produce more deterministic, repeatable outputs. Higher temperatures (0.7-1.0) increase variety but also the risk of incoherent or fabricated content. Choose based on your task.

The Bottom Line

Language models generate text through a remarkably elegant process: tokenization converts text to numbers, embeddings encode meaning in high-dimensional space, transformer layers enrich representations through self-attention, and a language head predicts probabilities for the next token. Repeat thousands of times.

Yet this simple loop—predicting one token at a time—produces outputs so coherent that millions of people use these systems daily for writing, coding, analysis, and creative work. The emergent capabilities arise not from any single component but from the interaction of billions of parameters trained on trillions of tokens.

As you type your next prompt, remember: somewhere in a data center, a transformer is calculating probabilities, selecting tokens, and building a response—one piece at a time. Understanding that process doesn't diminish the magic. It helps you wield it more effectively.

Sources

- Vaswani, A., et al. (2017). "Attention Is All You Need." Advances in Neural Information Processing Systems.

- Machine Learning Mastery. (2025). "The Journey of a Token: What Really Happens Inside a Transformer."

- EHGA. (2026). "How Autoregressive Generation Works in Large Language Models: Step-by-Step Token Production."

- ML Journey. (2025). "Transformer Architecture Explained for Beginners."

- OpenAI. GPT-2 and GPT-3 Technical Documentation and GitHub Repositories.